MMLU

MMLU, short for Massive Multitask Language Understanding, is a benchmark for evaluating the knowledge and language understanding of large language models. Introduced in September 2020 by researchers at UC Berkeley, it is currently one of the best-known benchmarks of its kind.

About

Overview

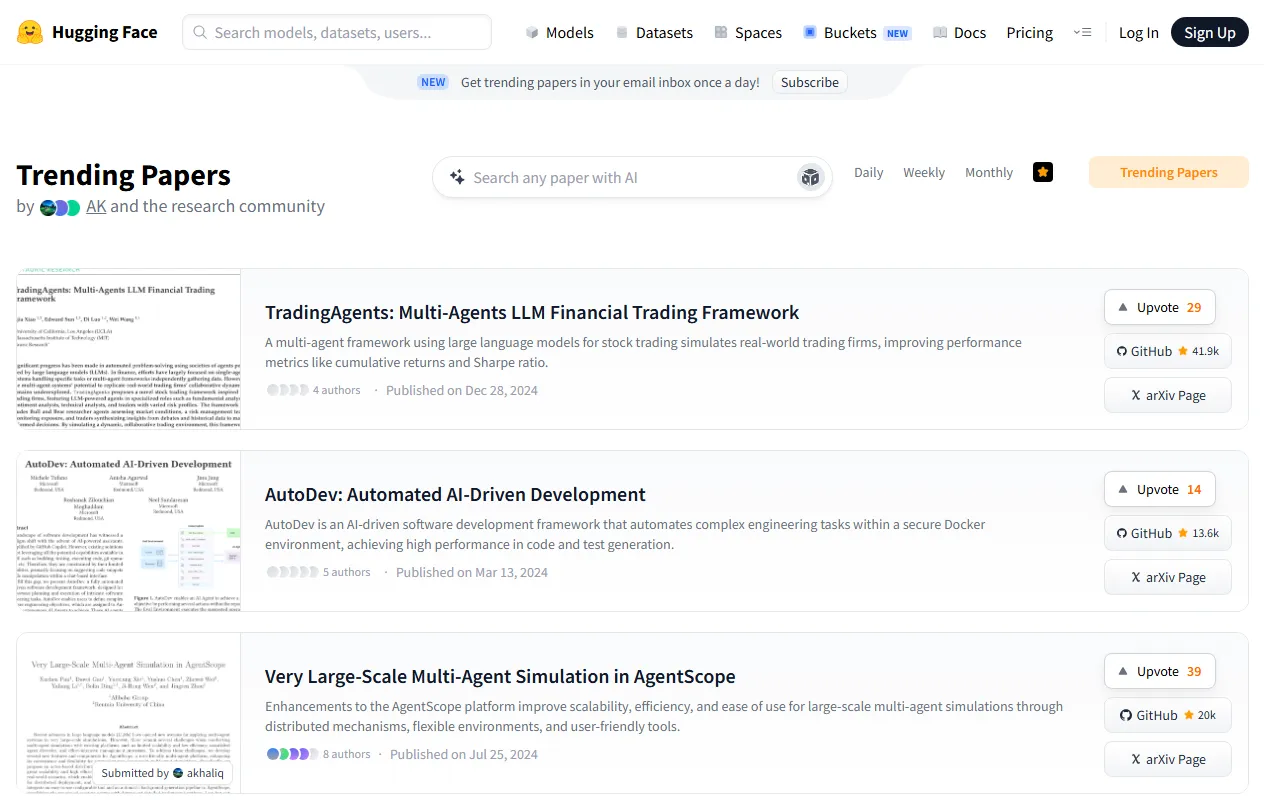

MMLU (Massive Multitask Language Understanding) is a comprehensive benchmark for evaluating knowledge and language understanding in large language models, proposed by researchers at the University of California, Berkeley in 2020. It is currently one of the most frequently cited general capability benchmarks and is often used to compare the performance of different models across multi-disciplinary and multi-task scenarios.

MMLU assesses models' understanding, reasoning, and question-answering abilities across a wide range of knowledge domains through multiple-choice questions in English. Its coverage is broad, including both foundational subjects and specialized fields, so it is often regarded as an important reference indicator for measuring a model's "breadth of knowledge" and "comprehensive understanding ability."
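The multiple-choice format described above can be illustrated with a short sketch. It assumes the prompt layout commonly used in MMLU evaluations: a one-line subject header, optional solved examples, four lettered options, and a trailing `Answer:`. The helper names (`format_question`, `build_prompt`, `parse_answer`) and the sample questions are hypothetical, and evaluation harnesses differ in their exact wording.

```python
import re
from typing import List, Optional, Sequence, Tuple

# MMLU questions have four answer options, labelled A-D here.
CHOICE_LABELS = ["A", "B", "C", "D"]


def format_subject(subject: str) -> str:
    """Turn a subject id such as 'high_school_physics' into 'high school physics'."""
    return subject.replace("_", " ")


def format_question(question: str, choices: Sequence[str], answer: Optional[str] = None) -> str:
    """Render one question with lettered options.

    Solved examples include the answer letter; the question under test
    ends at "Answer:" so that the model supplies the letter.
    """
    if len(choices) != len(CHOICE_LABELS):
        raise ValueError("expected exactly four answer options")
    lines = [question] + [f"{label}. {text}" for label, text in zip(CHOICE_LABELS, choices)]
    prompt = "\n".join(lines) + "\nAnswer:"
    if answer is not None:
        prompt += f" {answer}\n\n"
    return prompt


def build_prompt(
    subject: str,
    shots: List[Tuple[str, List[str], str]],
    question: str,
    choices: List[str],
) -> str:
    """Header, then k solved examples (k=0 gives a zero-shot prompt), then the test question."""
    header = (
        "The following are multiple choice questions (with answers) about "
        f"{format_subject(subject)}.\n\n"
    )
    examples = "".join(format_question(q, c, a) for q, c, a in shots)
    return header + examples + format_question(question, choices)


def parse_answer(completion: str) -> Optional[str]:
    """Return the answer letter at the start of a completion, e.g. ' C. Velocity' -> 'C'."""
    match = re.match(r"\s*\(?([ABCD])\b", completion)
    return match.group(1) if match else None


# Made-up question with one solved example (a 1-shot prompt).
prompt = build_prompt(
    "high_school_physics",
    shots=[("What is the SI unit of force?", ["Joule", "Newton", "Watt", "Pascal"], "B")],
    question="Which of these quantities is a vector?",
    choices=["Mass", "Speed", "Velocity", "Energy"],
)
print(prompt)
```

Many harnesses score a model by comparing the probabilities it assigns to the letters A-D after `Answer:` instead of parsing generated text; `parse_answer` covers only the simpler generate-then-parse approach.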

Leaderboard: https://paperswithcode.com/sota/multi-task-language-understanding-on-mmlu

Key Features

- Comprehensive multi-disciplinary evaluation: covers 57 tasks spanning elementary mathematics, U.S. history, computer science, law, and many other fields.
- Measures breadth of knowledge: tests whether a model has broad general knowledge as well as subject-specific knowledge.
- Evaluates language understanding: uses English questions to assess how well a model understands the question wording, the differences between answer options, and the surrounding context.
- Supports cross-model comparison: because MMLU has become a standard industry benchmark, scores from different models can be compared directly as a rough gauge of overall capability.
- Serves as a general capability reference: in academic research and model releases, MMLU is routinely reported as one of the standard tests of a model's overall performance.
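Because MMLU's 57 tasks contain different numbers of questions, turning them into one headline score requires a choice of averaging, which matters when comparing numbers across reports. A minimal sketch, assuming per-question correctness has already been recorded for each subject: pooling all questions (micro average) weights large subjects more, while averaging the per-subject accuracies (macro average) weights every subject equally. The function names and toy data are hypothetical.

```python
from typing import Dict, List

# Per-subject results: subject id -> one True/False entry per question
# (True means the model picked the correct letter).
Results = Dict[str, List[bool]]


def micro_average(results: Results) -> float:
    """Accuracy over all questions pooled together; larger subjects carry more weight."""
    total = sum(len(outcomes) for outcomes in results.values())
    correct = sum(sum(outcomes) for outcomes in results.values())
    return correct / total


def macro_average(results: Results) -> float:
    """Mean of per-subject accuracies; every subject carries equal weight."""
    per_subject = [sum(outcomes) / len(outcomes) for outcomes in results.values()]
    return sum(per_subject) / len(per_subject)


# Toy data (not real MMLU results): a large subject answered well, a small one poorly.
toy = {
    "subject_a": [True] * 90 + [False] * 10,  # 90 / 100 correct
    "subject_b": [True] * 5 + [False] * 5,    # 5 / 10 correct
}
print(f"micro: {micro_average(toy):.3f}")  # 95 / 110
print(f"macro: {macro_average(toy):.3f}")  # (0.9 + 0.5) / 2
```

The same per-question results yield noticeably different scores under the two conventions, so a score is only comparable with others computed the same way.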

Pricing

MMLU is an evaluation benchmark, not a commercial SaaS product, so it has no pricing of its own.

The Papers with Code leaderboard and the related paper information are free to access. The cost of actually running the evaluation depends on the model, the computing platform, and the inference method you use.
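For models served through a per-token-priced API, the evaluation cost mentioned above reduces to simple arithmetic: number of questions times tokens per question times price per token. The sketch below assumes that pricing model; only the test-split size (14,042 questions) comes from the benchmark, while the token counts and prices are hypothetical placeholders. For locally hosted models, GPU time takes the place of per-token prices.

```python
from dataclasses import dataclass

# Number of questions in the MMLU test split.
MMLU_TEST_QUESTIONS = 14_042


@dataclass
class CostAssumptions:
    """All values are hypothetical placeholders; substitute your model's real numbers."""
    prompt_tokens_per_question: int     # few-shot prompts are far longer than zero-shot ones
    output_tokens_per_question: int     # about 1 if the model only emits the answer letter
    usd_per_million_input_tokens: float
    usd_per_million_output_tokens: float


def estimate_api_cost(a: CostAssumptions, num_questions: int = MMLU_TEST_QUESTIONS) -> float:
    """Rough USD cost of one pass over the test set through a per-token-priced API."""
    input_tokens = num_questions * a.prompt_tokens_per_question
    output_tokens = num_questions * a.output_tokens_per_question
    return (
        input_tokens * a.usd_per_million_input_tokens
        + output_tokens * a.usd_per_million_output_tokens
    ) / 1_000_000


example = CostAssumptions(
    prompt_tokens_per_question=600,
    output_tokens_per_question=1,
    usd_per_million_input_tokens=1.0,
    usd_per_million_output_tokens=2.0,
)
print(f"${estimate_api_cost(example):.2f}")
```

Prompt length dominates the estimate, so switching from zero-shot to few-shot prompting can change the cost far more than the number of generated tokens does.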

FAQ

What does MMLU mainly evaluate?

MMLU mainly evaluates how well large language models answer knowledge questions across many domains, focusing on knowledge mastery, language understanding, and, to some extent, reasoning.

What does MMLU include?

This benchmark includes 57 tasks spanning the humanities, social sciences, STEM, and other areas such as business and health. Many questions resemble academic or professional exam questions, and all of them are in English.
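MMLU results are often broken down by broad category (STEM, humanities, social sciences, other) as well as reported overall. A minimal sketch, assuming per-question correctness is recorded for each subject: the subject ids below are MMLU subject names, but the mapping is only a partial, illustrative subset whose category assignments should be checked against the official subject list, and `category_accuracy` is a hypothetical helper that pools questions within each category.

```python
from collections import defaultdict
from typing import Dict, List

# Illustrative subset of the subject-to-category grouping (the benchmark has 57
# subjects); verify against the official list before relying on it.
SUBJECT_CATEGORY = {
    "abstract_algebra": "STEM",
    "high_school_physics": "STEM",
    "high_school_us_history": "humanities",
    "professional_law": "humanities",
    "high_school_macroeconomics": "social sciences",
    "us_foreign_policy": "social sciences",
    "clinical_knowledge": "other",
    "professional_medicine": "other",
}


def category_accuracy(results: Dict[str, List[bool]]) -> Dict[str, float]:
    """Pool per-question outcomes within each category and return its accuracy."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for subject, outcomes in results.items():
        category = SUBJECT_CATEGORY[subject]  # raises KeyError for subjects not in the subset
        correct[category] += sum(outcomes)
        total[category] += len(outcomes)
    return {category: correct[category] / total[category] for category in total}


# Toy outcomes (not real results).
scores = category_accuracy({
    "abstract_algebra": [True, False],
    "high_school_physics": [True, True],
    "professional_law": [False, False, True],
})
print(scores)
```

A category breakdown shows, for example, whether a strong overall score hides weak performance in one area.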

Is MMLU suitable for judging a model's real capabilities?

It is suitable for measuring a model's overall knowledge and understanding level, but it cannot fully represent a model's actual performance in long-text generation, tool use, multi-turn dialogue, or specific industry scenarios. Therefore, it usually needs to be considered together with other evaluations.

Why is MMLU so common?

Because it covers a broad range of subjects, is widely cited, and makes cross-model comparison easy, it has become one of the core metrics reported in many large-model papers and leaderboards.
